Top AI & Tech News (Through April 13th)

🚀 Artemis II | 💰 Billion Dollar Build | 🧬 DefensePredictor

Welcome to another week of defining AI breakthroughs 🚀

With the successful return of Artemis II, humanity has taken a decisive step back into deep space. As we push beyond Earth, intelligence itself must evolve to operate in environments where autonomy, resilience, and real-time decision-making are non-negotiable.

We are beginning to move from artificial intelligence as a tool… to intelligence as an infrastructure for human expansion.

🔍 This Week’s Big Idea: The Rise of AI-Powered Spacefaring Intelligence

Artemis II marks the beginning of a new operating environment for AI. In deep space, systems must function with minimal human intervention, adapt to unpredictable conditions, and make high-stakes decisions in real time.

This accelerates the need for a new class of AI systems:

- Autonomous agents that can operate without constant human oversight
- Simulation-driven intelligence that can predict and adapt to unknown scenarios
- Human-machine systems that extend cognition beyond Earth

Space is the ultimate stress test for AI.

As missions scale—from lunar bases to Mars exploration—AI will become the backbone of navigation, robotics, health monitoring, resource management, and mission planning. The same technologies enabling astronauts to survive and operate in space will redefine industries back on Earth.

For Chief AI Officers, this is not a distant future scenario. It is an early signal of where AI is heading:

From assisting humans… to enabling humanity.

We are entering an era where intelligence must operate across environments, not just across datasets.

How CAIOs should respond:

Adopt a frontier-driven AI strategy.

Most organizations are optimizing AI for current workflows. But the next wave of advantage will come from systems designed for extreme environments—where constraints force innovation.

CAIOs should begin tracking developments in:

- Autonomous systems and agentic AI
- Simulation and digital twin environments
- Human-AI collaboration in high-risk settings
- Space-tech and deep infrastructure innovation

These are not niche domains—they are leading indicators of where AI capability is heading.

⭐ This Week’s Recommendation

Run a “Frontier Intelligence Audit.”

Evaluate where your AI systems depend heavily on human input or controlled environments. Then ask:

- Where would our systems fail if human oversight were removed?
- Which processes require real-time adaptation in unpredictable conditions?
- What would change if AI had to operate independently for extended periods?

The answers reveal how prepared your organization is for the next generation of AI.

⚠️ Closing Question to Sit With

If the first era of AI was about optimizing work on Earth, and the next era is about enabling humanity to operate beyond it…

are you preparing your organization for a future where intelligence is no longer bounded by the planet it was built on?

Here are the stories for the week:

- Perplexity Launches “The Billion Dollar Build” Startup Competition Around Perplexity Computer
- Anthropic Launches Project Glasswing to Secure Critical Software Using Frontier AI
- OpenAI Pauses UK Data Centre Project Amid Energy and Regulatory Concerns
- DefensePredictor: AI Discovers Hidden Bacterial Immune Systems at Scale
- Artemis II Returns Safely to Earth After Historic Crewed Mission Around the Moon
- OpenAI Introduces Child Safety Blueprint to Combat AI-Enabled Exploitation

Perplexity Launches “The Billion Dollar Build” Startup Competition Around Perplexity Computer

Perplexity has announced The Billion Dollar Build, an eight-week competition challenging founders to use Perplexity Computer as the primary engine for building a company with a credible path to a $1 billion valuation. The contest, launching April 14, 2026, offers up to $1 million in seed investment shared among up to three winners, plus up to $1 million in Perplexity Computer credits. Participants must build, validate, and demonstrate traction using Perplexity’s multi-agent AI system, which can reason, research, code, delegate, and run workflows autonomously.

The competition reflects Perplexity’s broader push to position Computer not just as a productivity tool, but as a company-building platform. Finalists will pitch live in June, showing both their product and how Perplexity Computer powers their company's operations. By tying funding directly to AI-native execution, Perplexity is betting that the next generation of startups may be built with dramatically smaller teams and much higher automation leverage. Source: Perplexity Fund

💡 Why it matters (for the P&L):

This is a signal that AI is moving from workflow assistance to venture creation infrastructure. If startups can build with two people plus autonomous systems instead of a 20-person team, the economics of company formation, operating margins, and speed to scale could change dramatically. That has implications not just for founders, but for incumbents competing against AI-native businesses with radically lower cost structures.

💡 What to do this week:

Identify one function in your business that still assumes headcount-heavy execution—operations, research, support, or analysis—and ask whether an AI-native redesign could reduce staffing needs while increasing output. The companies that win in this next phase may not be the ones with the most people, but the ones with the best orchestration of human talent and autonomous systems.

Anthropic Launches Project Glasswing to Secure Critical Software Using Frontier AI

Anthropic has announced Project Glasswing, a major cross-industry initiative bringing together leaders including AWS, Microsoft, Google, Apple, Nvidia, and JPMorgan to secure critical global software infrastructure using advanced AI. At the center of the initiative is Claude Mythos Preview, a frontier AI model with unprecedented coding and reasoning capabilities—already able to autonomously identify and exploit thousands of high-severity vulnerabilities across major operating systems, web browsers, and core infrastructure. In testing, the model has uncovered decades-old flaws missed by human experts and traditional security tools, demonstrating near-expert or superhuman performance in cybersecurity tasks.

Rather than releasing the model publicly, Anthropic is deploying it defensively through partners to detect and patch vulnerabilities at scale. The company has committed up to $100 million in usage credits and additional funding to support open-source security efforts. Project Glasswing aims to establish new industry-wide standards for AI-driven cybersecurity as the threat landscape shifts toward AI-augmented attacks that can operate faster and at greater scale than ever before. Source: Anthropic

💡 Why it matters (for the P&L):

This marks a structural shift in cybersecurity economics. AI is reducing the cost of discovering and exploiting vulnerabilities, meaning attackers can scale faster, but so can defenders. Organizations that fail to adopt AI-native security practices risk rapidly compounding exposure as attack surfaces grow and response windows shrink. Conversely, those leveraging AI for proactive defense could significantly reduce breach risk, downtime, and compliance costs, turning cybersecurity from a reactive expense into a strategic advantage.

💡 What to do this week:

Audit your organization’s cybersecurity posture through an AI lens. Identify where vulnerability detection, code review, or threat monitoring still relies heavily on manual processes. Begin exploring AI-assisted security tools and partnerships, particularly for codebase analysis and real-time threat detection. The shift is clear: in an AI-driven threat environment, security teams must scale at machine speed or fall behind.

OpenAI Pauses UK Data Centre Project Amid Energy and Regulatory Concerns

OpenAI has paused its planned Stargate UK data centre project, a multi-billion-pound initiative intended to expand AI infrastructure in north-east England. The project, part of a broader £31 billion UK tech investment push, aimed to strengthen the country’s “sovereign compute” capabilities through partnerships with Nvidia and Nscale. However, OpenAI has stated it will only proceed when “the right conditions” are in place—specifically citing high energy costs and regulatory uncertainty as key barriers to long-term infrastructure investment.

While OpenAI reaffirmed its commitment to the UK as a major research hub, the decision highlights growing friction between national ambitions to become AI leaders and the realities of building large-scale compute infrastructure. Concerns include energy pricing, evolving AI regulation, and unresolved issues around copyright laws for training data. The pause underscores a broader trend: AI expansion is increasingly constrained not by demand, but by the economics and policy environment surrounding compute. Source: BBC

💡 Why it matters (for the P&L):

This is a clear signal that AI competitiveness is now infrastructure-dependent. High energy costs and regulatory uncertainty can directly delay or derail AI investments, impacting innovation timelines and operational scalability. For enterprises, this introduces new strategic risks: where your compute runs—and under what regulatory and cost conditions—can materially affect margins, speed to market, and long-term competitiveness.

💡 What to do this week:

Assess your organization’s exposure to infrastructure constraints. Map where your AI workloads are hosted and evaluate risks related to energy costs, regulatory changes, and geographic concentration. Begin exploring diversification strategies—such as multi-region deployments, alternative compute providers, or energy-efficient architectures—to ensure resilience as AI infrastructure becomes a critical bottleneck.

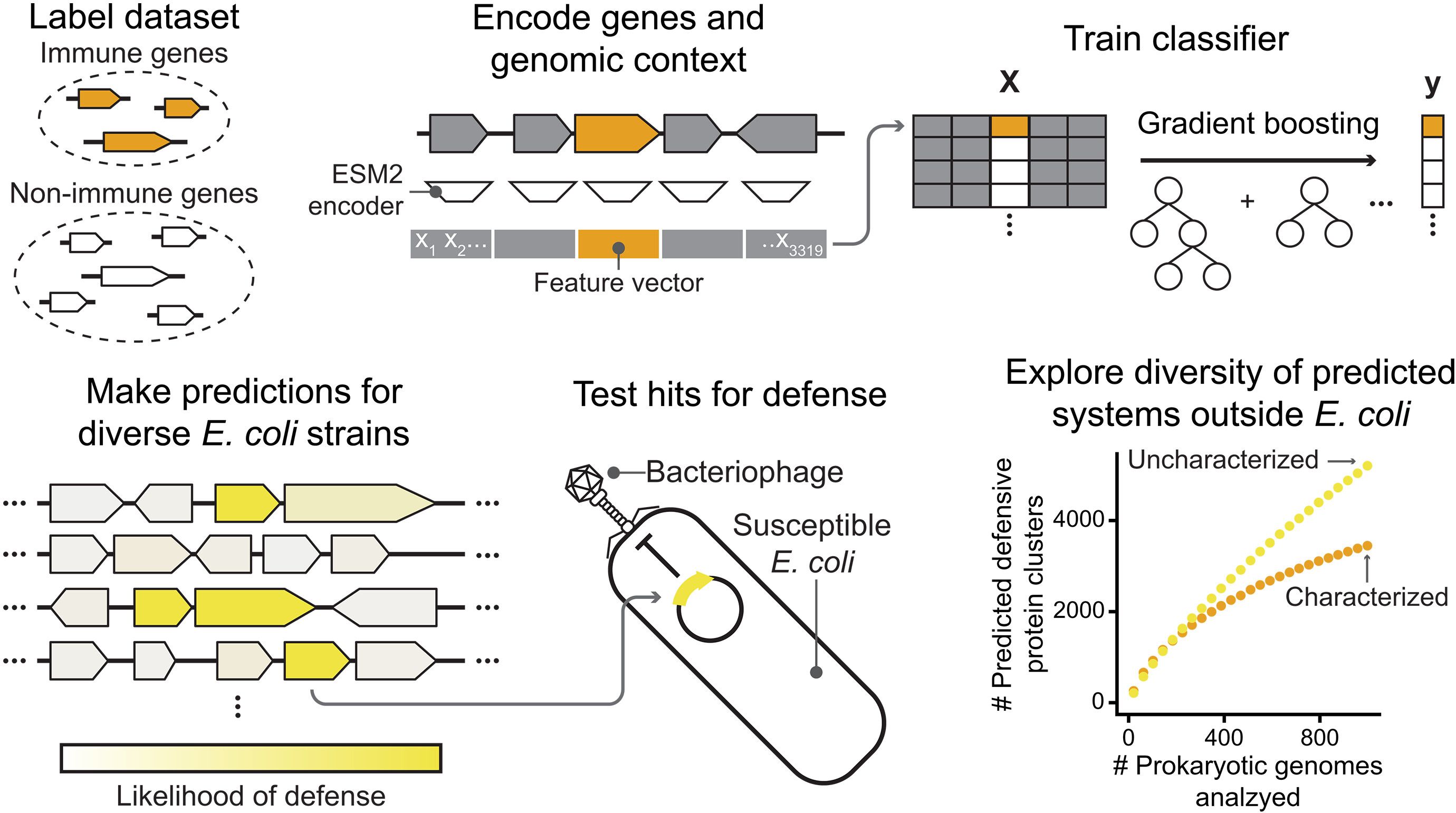

DefensePredictor: AI Discovers Hidden Bacterial Immune Systems at Scale

Researchers have developed DefensePredictor, a machine learning model designed to uncover previously unknown immune systems in bacteria. By combining protein language models with genomic context, the system can identify antiphage (virus-fighting) defense mechanisms across diverse bacterial genomes. When tested on E. coli, the model predicted over 600 potential defense-related proteins—many of which had no similarity to known systems—and experimentally validated 42 new functional immune responses. Expanding the analysis to 1,000 genomes, the model identified thousands of additional candidate defense proteins, revealing that bacterial immunity is far more extensive than previously understood.

This breakthrough highlights how AI is accelerating discovery in fundamental biology. Many bacterial immune systems—such as CRISPR—have historically become powerful biotechnologies. By open-sourcing DefensePredictor, researchers aim to unlock a new wave of innovation across biotechnology, medicine, and synthetic biology by mapping this vast, previously hidden layer of biological defense systems. Source: Science Journal

💡 Why it matters (for the P&L):

This signals a new frontier in AI-driven discovery, where models are not just generating content but uncovering entirely new biological systems. For industries like biotech, pharmaceuticals, and healthcare, this could translate into new drug targets, gene-editing tools, and therapeutic platforms. Organizations that integrate AI into R&D pipelines could significantly reduce discovery timelines and gain a competitive edge in innovation.

💡 What to do this week:

Assess where AI can accelerate discovery workflows within your organization. Identify domains—such as drug development, materials science, or genomics—where large-scale pattern recognition could unlock new insights. Begin exploring partnerships or internal capabilities that combine domain expertise with AI to move from data analysis to breakthrough discovery.

Artemis II Returns Safely to Earth After Historic Crewed Mission Around the Moon

NASA’s Artemis II crew has successfully splashed down off the coast of California, completing a 10-day mission around the moon and back. The four astronauts — Reid Wiseman, Victor Glover, Christina Koch, and Jeremy Hansen — traveled farther from Earth than any humans in history, surpassing the distance record previously set by Apollo 13 in 1970. The mission marked the first crewed lunar journey in more than 50 years and is being hailed by NASA as a major step toward returning humans to the moon’s surface.

The mission also served as a critical systems test for future Artemis flights. NASA officials said the Orion spacecraft performed well overall, though engineers will now inspect the heat shield, service module, and onboard systems to prepare for Artemis III, which is currently slated for next year. Beyond the symbolism, Artemis II demonstrated that deep-space human missions are moving from aspiration back into operational reality. Source: CNN

💡 Why it matters (for the P&L):

Artemis II reinforces that space is becoming an active arena for long-term infrastructure, research, and industrial investment. Human deep-space missions accelerate demand for robotics, advanced materials, simulation systems, autonomy, health monitoring, and communications technologies — many of which have spillover value across defense, healthcare, logistics, and AI-enabled systems on Earth.

💡 What to do this week:

Track lunar and deep-space programs as signals for adjacent innovation opportunities. Identify whether your organization has capabilities — in AI, simulation, autonomy, sensing, materials, or digital infrastructure — that could apply to the growing space economy. The next wave of commercial opportunity may come not just from Earth-based AI, but from the systems needed to support human operations beyond it.

OpenAI Introduces Child Safety Blueprint to Combat AI-Enabled Exploitation

OpenAI has released a Child Safety Blueprint, a comprehensive policy and technical framework aimed at addressing the growing risks of AI-enabled child sexual exploitation. Developed in collaboration with organizations such as the National Center for Missing and Exploited Children (NCMEC) and the Attorney General Alliance, the blueprint focuses on three core priorities: modernizing laws to address AI-generated abuse content, improving industry-wide reporting and coordination, and embedding safety-by-design mechanisms directly into AI systems. The framework emphasizes layered defenses, including detection systems, refusal mechanisms, human oversight, and continuous adaptation to evolving threats.

The initiative reflects a broader industry shift toward proactive governance as AI capabilities scale rapidly. By combining legal, operational, and technical approaches, OpenAI aims to strengthen accountability across the ecosystem and enable earlier detection and prevention of harm. The blueprint is positioned as a collaborative starting point, calling for coordinated action across technology companies, governments, and law enforcement to address one of the most urgent challenges in the AI era. Source: OpenAI

💡 Why it matters (for the P&L):

AI safety is rapidly becoming a regulatory and reputational risk factor. Organizations deploying AI systems will increasingly be expected to demonstrate robust safeguards, particularly in high-risk domains. Failure to implement safety-by-design principles could lead to legal exposure, compliance costs, and brand damage. Conversely, companies that proactively align with emerging safety frameworks can build trust, accelerate adoption, and reduce downstream risk.

💡 What to do this week:

Evaluate your AI systems for safety-by-design readiness. Identify where safeguards—such as misuse detection, human oversight, and reporting mechanisms—are missing or underdeveloped. Begin aligning internal policies with emerging industry standards, especially in sensitive use cases. As regulation tightens, organizations that embed safety early will be better positioned to scale responsibly and maintain stakeholder trust.

About The AI Citizen Hub - by World AI X

This isn’t just another AI newsletter; it’s an evolving journey into the future. When you subscribe, you're not simply receiving the best weekly dose of AI and tech news, trends, and breakthroughs—you're stepping into a living, breathing entity that grows with every edition. Each week, The AI Citizen evolves, pushing the boundaries of what a newsletter can be, with the ultimate goal of becoming an AI Citizen itself in our visionary World AI Nation.

By subscribing, you’re not just staying informed—you’re joining a movement. Leaders from all sectors are coming together to secure their place in the future. This is your chance to be part of that future, where the next era of leadership and innovation is being shaped.

Join us, and don’t just watch the future unfold—help create it.

For advertising inquiries, feedback, or suggestions, please reach out to us at [email protected].
